Artificial intelligence is a field of computer science and engineering focused on the creation of "intelligent machines" that can learn and act like humans. The idea has a long history reaching back to ancient civilizations, but it was in the 20th century that the field truly began to take shape.

One of the earliest recorded references to artificial intelligence can be found in the ancient Greek myth of Pygmalion, in which the sculptor Pygmalion creates a statue so lifelike that it comes to life.

In the Middle Ages, the concept of automata, or self-operating machines, emerged. These early automata were often mechanical devices designed to perform simple tasks like ringing a bell or playing music.

Alan Turing: Computing Machinery and Intelligence

It was in the 20th century that the field of artificial intelligence began to take shape. In 1950, computer scientist Alan Turing published a paper titled "Computing Machinery and Intelligence." Its centerpiece was a method, now known as the "Turing Test," for determining whether a machine exhibits intelligent behavior.

The Turing Test involves a human evaluator interacting with both a human and a machine. If the evaluator cannot reliably tell which is the human and which is the machine, the machine is said to have passed the test.

In 1956, a group of researchers and computer scientists, including John McCarthy and Marvin Minsky, attended a conference at Dartmouth College. The topic of the conference was artificial intelligence, and the event is widely regarded as the birth of the modern AI field.

During the 1950s and 1960s, AI research focused on trying to create machines that could perform specific tasks, such as playing chess or solving mathematical problems. At that time, artificial intelligence was considered a narrow field, and expectations for the future were limited.

In the 1970s and 1980s, research shifted towards creating more general-purpose AI, or strong AI, which could perform a wider range of tasks.

Deep Blue Beats Kasparov: Mankind vs. Artificial Intelligence

One of the major milestones in the history of AI occurred in 1997, when IBM's Deep Blue computer defeated world chess champion Garry Kasparov in a six-game match. Deep Blue was designed specifically to play chess. Its victory over Kasparov demonstrated the incredible progress that had been made in the field of AI.

In the 21st century, AI has continued to make significant strides. Machine learning, a subfield of AI that involves training machines to learn from data rather than being explicitly programmed, has revolutionized the field. Today, AI is used in a wide range of applications, from self-driving cars to language translation to personal assistants like Apple's Siri and Amazon's Alexa.

As AI continues to advance, it has the potential to transform society in a variety of ways. Some experts believe that AI could be used to solve some of the world's most pressing problems, such as climate change and disease, while others are more skeptical about its potential impact. Regardless, AI is likely to play an increasingly important role in our lives in the years to come.

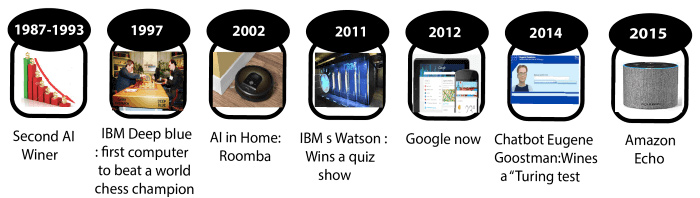

Artificial Intelligence History Milestones from Ancient Ages till Now

Ancient Times:

The ancient Greek myth of Pygmalion tells the story of a sculptor who creates a statue so lifelike that it comes to life. This myth is often cited as one of the earliest recorded references to artificial intelligence.

Middle Ages:

The concept of automata, or self-operating machines, emerges. These early automata are often mechanical devices designed to perform simple tasks like ringing a bell or playing music.

1700s:

Clockwork mechanisms, already in use since the late Middle Ages, are refined and used to build elaborate automata, laying the foundations for the development of more sophisticated automated machines in the future.

1800s:

The Industrial Revolution took place, leading to the development of machines that could perform tasks faster and more efficiently than humans.

1900s:

The field of artificial intelligence begins to take shape, with researchers exploring how to create intelligent machines that can work and learn like humans.

1943:

Warren McCulloch and Walter Pitts publish "A Logical Calculus of the Ideas Immanent in Nervous Activity," a paper that introduces the concept of artificial neural networks: networks of simple processing units modeled on the neurons of the brain.

1950:

Alan Turing publishes "Computing Machinery and Intelligence." The article proposes what is now called the "Turing Test" as a way to judge whether a machine displays intelligent behavior: the test checks whether a human evaluator can distinguish the machine's responses from those of a human.

1956:

The Dartmouth Conference, a gathering of researchers and computer scientists, is held at Dartmouth College and is considered the birth of the modern field of artificial intelligence.

1966:

MIT researcher Joseph Weizenbaum publishes ELIZA. It is an important step for AI because ELIZA is one of the first chatbots capable of carrying out simple conversations with humans.

1971:

The first expert system, called Dendral, was developed at Stanford University. Expert systems are designed to mimic the decision-making abilities of a human expert in a specific domain. Above all, Dendral helped scientists study hypothesis formation in science.

1980:

Machine learning, which involves training machines to learn from data, begins to take shape as a distinct research field, building on ideas that date back to the 1950s.

1997:

IBM's Deep Blue computer defeats world chess champion Garry Kasparov in a six-game match, demonstrating the progress that has been made in the field of artificial intelligence.

2011:

Apple releases Siri, a virtual personal assistant.

2016:

Google DeepMind's AlphaGo defeats world Go champion Lee Sedol in a five-game match, marking a significant milestone in the field of artificial intelligence.

2017:

Google DeepMind's AlphaGo Zero defeats the previous version of AlphaGo after learning purely through self-play, without any human game data, demonstrating the ability of AI to learn and improve on its own.

2017:

The term "deep learning" becomes widely used to describe a type of machine learning that trains artificial neural networks with many layers of interconnected nodes.

2019:

OpenAI's language model, GPT-2, is released, demonstrating the ability of artificial intelligence to generate human-like text.

2020:

OpenAI's language model, GPT-3, is released, surpassing previous models in terms of its ability to generate human-like text and perform a wide range of language tasks.

2022:

AI continues to make significant strides and is used in a wide range of applications, including self-driving cars, language translation, and personal assistants.

Conclusion

Artificial intelligence marks a new stage in human life. It is a rapidly developing field that shapes the course of technology and acts as a catalyst for innovation. AI is used in many applications, from self-driving cars and voice-activated personal assistants to medical diagnosis and stock market prediction.

While AI has the potential to bring significant benefits, it also raises important ethical and societal questions. How do we ensure that AI is developed and used in a fair, accountable, and transparent way? How do we address the potential impact of AI on employment and the economy?

These questions will need to be addressed as AI continues to advance. Realizing the benefits of AI will require approaching its development and use with caution and carefully considering the ethical and societal implications.

Written by: Aykut Alan - ihracatco - 12.2022
